Why Is AI Driving a Memory Shortage?

AI is driving a memory shortage because modern AI systems need very large amounts of high-speed memory to train models on large datasets and to run them efficiently.

Unlike traditional computing, AI workloads demand:

- Massive parallel processing

- High-speed data access

- Continuous memory availability

These requirements have shifted global memory demand toward AI hardware in a very short time.
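To give a rough sense of scale, the sketch below estimates the memory needed just to hold a model's weights. The function name, the 70-billion-parameter figure, and the 2 bytes per parameter (16-bit precision) are illustrative assumptions, not figures from any specific system. Real deployments also need memory for activations, caches, and, during training, optimizer state.

```python
def weight_memory_gb(num_parameters: int, bytes_per_parameter: int = 2) -> float:
    """Return the memory needed to hold model weights, in gigabytes (10^9 bytes)."""
    return num_parameters * bytes_per_parameter / 1e9


# Hypothetical example: a 70-billion-parameter model stored at 16-bit precision.
print(weight_memory_gb(70_000_000_000))  # 140.0
```

Under these assumptions, the weights alone would exceed the capacity of a single 128GB module before any working data is loaded.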

Key Memory Manufacturers Dominating the Market

The global memory supply is controlled by a small number of major manufacturers:

1. Samsung

- One of the largest DRAM manufacturers globally

- Major producer of both DDR5 and HBM

- Heavy focus on AI-driven memory solutions

2. SK Hynix

- Major supplier of High Bandwidth Memory (HBM)

- Key partner in AI GPU ecosystems

- Rapidly expanding production for AI infrastructure

3. Micron Technology

- Strong presence in enterprise and data center memory

- Increasing investment in AI-focused DRAM

Why This Matters

Because only a few manufacturers control global supply, any shift of production capacity toward AI memory directly reduces the availability of traditional server memory.

Manufacturers are prioritizing:

- Higher-margin AI memory

- Long-term contracts with hyperscalers

- Advanced memory technologies

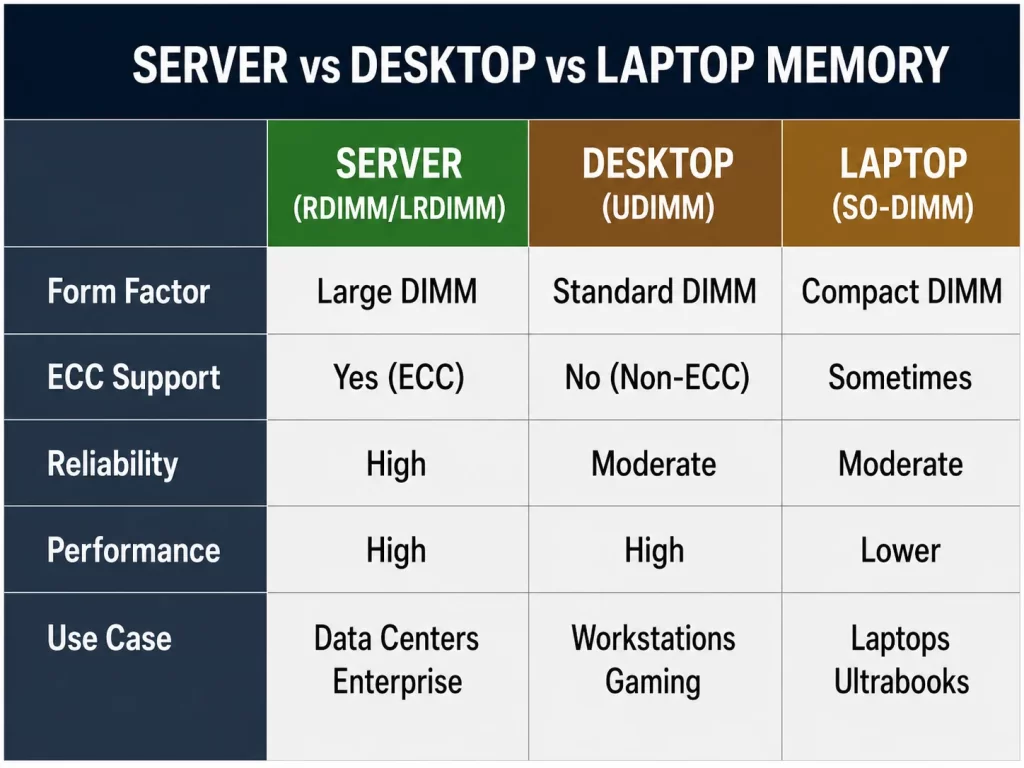

Server vs Desktop vs Laptop Memory: Key Differences

Understanding the difference between memory types explains why shortages impact businesses differently.

1. Server Memory (Enterprise RAM)

Key Features:

- ECC (Error-Correcting Code)

- Registered (RDIMM) or load-reduced (LRDIMM) module design

- Designed for stability and uptime

Use Cases:

- Data centers

- Virtualization environments

- AI workloads
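To illustrate the idea behind the ECC feature listed above, here is a minimal sketch of a Hamming(7,4) code, which can correct any single flipped bit in a 7-bit word. This is a simplified teaching example and an assumption on our part, not the scheme any particular server module uses; real ECC memory protects much wider data words with additional check bits. The function names are hypothetical.

```python
def hamming74_encode(data_bits):
    """Encode 4 data bits into a 7-bit Hamming codeword (bit positions 1..7)."""
    code = [0] * 8  # index 0 is unused so list indices match bit positions
    code[3], code[5], code[6], code[7] = data_bits
    # Each parity bit covers the positions whose binary index includes it.
    code[1] = code[3] ^ code[5] ^ code[7]
    code[2] = code[3] ^ code[6] ^ code[7]
    code[4] = code[5] ^ code[6] ^ code[7]
    return code[1:]


def hamming74_correct(codeword):
    """Fix up to one flipped bit and return the 4 original data bits."""
    code = [0] + list(codeword)
    syndrome = 0
    for position in range(1, 8):
        if code[position]:
            syndrome ^= position  # XOR of the positions of all set bits
    if syndrome:  # a non-zero syndrome is the position of the flipped bit
        code[syndrome] ^= 1
    return [code[3], code[5], code[6], code[7]]


stored = hamming74_encode([1, 0, 1, 1])
stored[4] ^= 1  # simulate a single-bit memory error
print(hamming74_correct(stored))  # [1, 0, 1, 1]
```

The extra check bits are part of why server memory costs more than consumer memory: some of the module's capacity is spent on detecting and repairing errors rather than storing data.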

2. Desktop (Consumer) Memory

Key Features:

- Non-ECC (most cases)

- Lower cost

- Performance-focused, but without the error correction that server memory provides

Use Cases:

- Gaming

- Workstations

- General computing

3. Laptop Memory

Key Features:

- SO-DIMM form factor

- Lower power consumption

- Limited upgrade capacity

Server memory is built for reliability and scale, while desktop and laptop memory prioritize cost and consumer performance.

Why Server Memory Is Hit the Hardest

AI infrastructure primarily relies on server-grade memory, not consumer RAM.

Key Reasons:

- Data centers require ECC stability

- AI systems demand large memory pools

- Enterprise environments scale memory massively

This concentrates demand specifically on server memory, driving shortages and price increases.

The Rise of High Bandwidth Memory (HBM)

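HBM is a type of memory that stacks DRAM dies vertically and sits in the same package as the GPU or AI accelerator, connected through a very wide interface.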

Key Advantages:

- Extremely fast data transfer

- Closer integration with GPUs

- Ideal for machine learning
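The speed advantage comes mostly from interface width. As a rough sketch, peak theoretical bandwidth is the bus width in bytes multiplied by the transfer rate. The function name is hypothetical, and the two configurations are chosen as examples: a 64-bit DDR5-4800 module and a single HBM3 stack with a 1024-bit interface at 6.4 Gb/s per pin. Sustained real-world throughput is lower than these peak figures.

```python
def peak_bandwidth_gb_s(bus_width_bits: int, transfers_per_second: float) -> float:
    """Peak theoretical bandwidth in GB/s: bytes per transfer times transfers per second."""
    return bus_width_bits / 8 * transfers_per_second / 1e9


# Example configurations (assumed for illustration).
ddr5_module = peak_bandwidth_gb_s(64, 4.8e9)    # DDR5-4800, 64 data bits
hbm3_stack = peak_bandwidth_gb_s(1024, 6.4e9)   # HBM3, 1024-bit interface

print(f"DDR5-4800 module: {ddr5_module:.1f} GB/s")  # 38.4 GB/s
print(f"HBM3 stack:       {hbm3_stack:.1f} GB/s")   # 819.2 GB/s
```

An accelerator typically carries several HBM stacks, so the gap at the system level is larger than the single-stack comparison suggests.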

Impact on Market:

Manufacturers are shifting production capacity away from DDR4 and DDR5 and toward HBM.

Because HBM is a higher-margin product, this shift reduces the supply of traditional server memory.

Where Memory Is Headed (2026–2030 Outlook)

Short-Term (1–2 Years)

- Continued shortages of DDR4 and DDR5

- High resale value for used server memory

- Increased reliance on secondary markets

Mid-Term (3–5 Years)

- New semiconductor fabs begin production

- Memory supply stabilizes

- Prices begin to normalize

Long-Term Trends

1. AI Will Permanently Increase Demand

Memory requirements will not return to pre-AI levels.

2. Higher Capacity Modules Become Standard

DIMMs of 128GB and above will become more common.

3. DDR6 Development

The next-generation DDR6 standard is already in early development.

4. Hybrid Memory Architectures

Systems will increasingly combine different memory types, such as HBM alongside DDR, to balance bandwidth and capacity.

What This Means for Businesses

Businesses should expect higher memory costs, longer lead times, and increased value for existing hardware.

Key Takeaway

The memory shortage is not a brief disruption; it reflects a structural shift in demand driven by AI.

This creates a rare opportunity:

Your existing server memory is more valuable than ever.